Author: 櫰木

1 Create the Hive Kerberos principal

bash /root/bigdata/getkeytabs.sh /etc/security/keytab/hive.keytab hive
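The hive-site.xml below refers to the service principal as hive/_HOST@DTSTACK.COM. Hadoop replaces _HOST with the lower-cased fully qualified name of the host the service runs on, so the keytab needs an entry for every such host. getkeytabs.sh is the author's own script and is not shown here. It is an assumption that it creates principals of the form hive/<fqdn>@DTSTACK.COM; `klist -kt /etc/security/keytab/hive.keytab` can confirm this. The hypothetical helper below sketches the substitution, so the expected entry for a host can be compared with that listing:

```shell
#!/bin/sh
# Hypothetical helper: expand the _HOST placeholder in a Kerberos principal
# the same way Hadoop does for server principals (the host name is lower-cased).
# Usage: expand_principal <principal-pattern> <fqdn>
expand_principal() {
  host=$(printf '%s' "$2" | tr '[:upper:]' '[:lower:]')
  printf '%s\n' "$1" | sed "s/_HOST/$host/"
}
```

For example, on the node itself: `klist -kt /etc/security/keytab/hive.keytab | grep "$(expand_principal 'hive/_HOST@DTSTACK.COM' "$(hostname -f)")"`.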

2 Installation

Run the following on hd1.dtstack.com as root:

- Extract the package

[root@hd1.dtstack.com software]# tar -zxvf apache-hive-3.1.2-bin.tar.gz -C /opt

ln -s /opt/apache-hive-3.1.2-bin /opt/hive

- Set the environment variables (defined in /etc/profile, see section 4)

[root@hd1.dtstack.com software]# source /etc/profile

- Edit hive-env.sh

[root@hd1.dtstack.com software]# cd /opt/apache-hive-3.1.2-bin/conf

[root@hd1.dtstack.com conf]# cat >hive-env.sh<<'EOF'

export HADOOP_HOME=/opt/hadoop

export HIVE_HOME=/opt/hive

export HIVE_CONF_DIR=/opt/hive/conf

if [ "$SERVICE" = "hiveserver2" ] ; then

HADOOP_CLIENT_OPTS="$HADOOP_CLIENT_OPTS -Dcom.sun.management.jmxremote.authenticate=false -Dcom.sun.management.jmxremote.ssl=false -Dcom.sun.management.jmxremote.local.only=false -Dcom.sun.management.jmxremote.port=9611 -javaagent:/opt/prometheus/jmx_prometheus_javaagent-0.3.1.jar=9511:/opt/prometheus/hiveserver2.yml"

fi

if [ "$SERVICE" = "metastore" ] ; then

HADOOP_CLIENT_OPTS="$HADOOP_CLIENT_OPTS -Dcom.sun.management.jmxremote.authenticate=false -Dcom.sun.management.jmxremote.ssl=false -Dcom.sun.management.jmxremote.local.only=false -Dcom.sun.management.jmxremote.port=9606 -javaagent:/opt/prometheus/jmx_prometheus_javaagent-0.3.1.jar=9506:/opt/prometheus/hive_metastore.yml"

fi

TEZ_CONF_DIR=/opt/tez/conf

TEZ_JARS=/opt/tez

EOF
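Quoting the here-document delimiter decides what actually lands in hive-env.sh. With a bare EOF, the shell running `cat` expands $SERVICE and $HADOOP_CLIENT_OPTS when the file is written, usually to empty strings. The generated test then can never match when Hive starts. A quoted delimiter ('EOF') writes the text literally. The minimal demonstration below sets SERVICE only to make the expansion visible:

```shell
#!/bin/sh
# Demonstrates quoted vs unquoted here-document delimiters.
# SERVICE is given a value here only to make the expansion visible.
SERVICE=metastore

# Unquoted delimiter: $SERVICE is expanded while the text is written.
unquoted_line() {
cat <<EOF
if [ "$SERVICE" = "hiveserver2" ] ; then
EOF
}

# Quoted delimiter: the text is written literally, $SERVICE included.
quoted_line() {
cat <<'EOF'
if [ "$SERVICE" = "hiveserver2" ] ; then
EOF
}
```

To check which form was written, run `cat hive-env.sh` after generating the file. The line should read `if [ "$SERVICE" = "hiveserver2" ] ; then`, not `if [ "" = "hiveserver2" ] ; then`.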

- Edit hive-site.xml (including the Kerberos settings)

[root@hd1.dtstack.com conf]# cat >hive-site.xml<<EOF

<?xml version="1.0" encoding="UTF-8" standalone="no"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>javax.jdo.option.ConnectionURL</name>

<value>jdbc:mysql://hd1.dtstack.com:3306/metastore?allowPublicKeyRetrieval=true</value>

</property>

<property>

<name>hive.cluster.delegation.token.store.class</name>

<value>org.apache.hadoop.hive.thrift.DBTokenStore</value>

<description>Hive defaults to MemoryTokenStore, or ZooKeeperTokenStore</description>

</property>

<property>

<name>javax.jdo.option.ConnectionDriverName</name>

<value>com.mysql.jdbc.Driver</value>

</property>

<property>

<name>javax.jdo.option.ConnectionUserName</name>

<value>hive</value>

</property>

<property>

<name>javax.jdo.option.ConnectionPassword</name>

<value>123456</value>

</property>

<property>

<name>hive.metastore.warehouse.dir</name>

<value>/user/hive/warehouse</value>

</property>

<property>

<name>hive.metastore.thrift.impersonation.enabled</name>

<value>false</value>

</property>

<property>

<name>hive.exec.scratchdir</name>

<value>/user/hive/warehouse</value>

</property>

<property>

<name>hive.reloadable.aux.jars.path</name>

<value>/user/hive/udf</value>

</property>

<property>

<name>hive.metastore.schema.verification</name>

<value>false</value>

</property>

<property>

<name>hive.exec.dynamic.partition</name>

<value>true</value>

</property>

<property>

<name>hive.exec.dynamic.partition.mode</name>

<value>nonstrict</value>

</property>

<property>

<name>hive.server2.thrift.port</name>

<value>10000</value>

</property>

<property>

<name>hive.server2.webui.host</name>

<value>0.0.0.0</value>

</property>

<property>

<name>hive.server2.webui.port</name>

<value>10002</value>

</property>

<property>

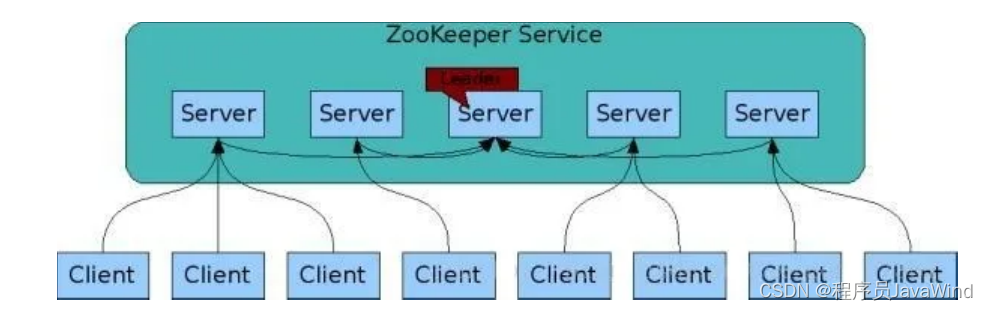

<name>hive.server2.support.dynamic.service.discovery</name>

<value>true</value>

</property>

<property>

<name>hive.zookeeper.quorum</name>

<value>hd1.dtstack.com:2181,hd3.dtstack.com:2181,hd2.dtstack.com:2181</value>

</property>

<property>

<name>hive.server2.thrift.min.worker.threads</name>

<value>300</value>

</property>

<property>

<name>hive.server2.async.exec.threads</name>

<value>200</value>

</property>

<property>

<name>hive.server2.idle.session.timeout</name>

<value>3600000</value>

</property>

<property>

<name>hive.server2.session.check.interval</name>

<value>60000</value>

</property>

<property>

<name>hive.server2.enable.doAs</name>

<value>false</value>

</property>

<property>

<name>hive.merge.mapfiles</name>

<value>true</value>

</property>

<property>

<name>hive.merge.size.per.task</name>

<value>256000000</value>

</property>

<property>

<name>hive.mapjoin.localtask.max.memory.usage</name>

<value>0.9</value>

</property>

<property>

<name>hive.mapjoin.smalltable.filesize</name>

<value>25000000</value>

</property>

<property>

<name>hive.mapjoin.followby.gby.localtask.max.memory.usage</name>

<value>0.55</value>

</property>

<property>

<name>hive.merge.mapredfiles</name>

<value>false</value>

</property>

<property>

<name>hive.exec.max.dynamic.partitions.pernode</name>

<value>100</value>

</property>

<property>

<name>hive.exec.max.dynamic.partitions</name>

<value>1000</value>

</property>

<property>

<name>hive.metastore.server.max.threads</name>

<value>100000</value>

</property>

<property>

<name>hive.metastore.server.min.threads</name>

<value>200</value>

</property>

<property>

<name>mapred.reduce.tasks</name>

<value>-1</value>

</property>

<property>

<name>hive.exec.reducers.bytes.per.reducer</name>

<value>64000000</value>

</property>

<property>

<name>hive.exec.reducers.max</name>

<value>1099</value>

</property>

<property>

<name>hive.auto.convert.join.noconditionaltask.size</name>

<value>20000000</value>

</property>

<property>

<name>spark.executor.cores</name>

<value>4</value>

</property>

<property>

<name>spark.executor.memory</name>

<value>456340275B</value>

</property>

<property>

<name>spark.driver.memory</name>

<value>966367641B</value>

</property>

<property>

<name>spark.yarn.driver.memoryOverhead</name>

<value>102000000</value>

</property>

<property>

<name>spark.yarn.executor.memoryOverhead</name>

<value>76000000</value>

</property>

<property>

<name>hive.map.aggr</name>

<value>true</value>

</property>

<property>

<name>hive.map.aggr.hash.percentmemory</name>

<value>0.5</value>

</property>

<property>

<name>hive.merge.sparkfiles</name>

<value>false</value>

</property>

<property>

<name>hive.merge.smallfiles.avgsize</name>

<value>16000000</value>

</property>

<property>

<name>hive.fetch.task.conversion</name>

<value>minimal</value>

</property>

<property>

<name>hive.fetch.task.conversion.threshold</name>

<value>32000000</value>

</property>

<property>

<name>hive.metastore.client.socket.timeout</name>

<value>600s</value>

</property>

<property>

<name>hive.server2.idle.operation.timeout</name>

<value>6h</value>

</property>

<property>

<name>hive.server2.idle.session.check.operation</name>

<value>true</value>

</property>

<property>

<name>hive.server2.webui.max.threads</name>

<value>50</value>

</property>

<property>

<name>hive.metastore.connect.retries</name>

<value>10</value>

</property>

<property>

<name>hive.warehouse.subdir.inherit.perms</name>

<value>false</value>

</property>

<property>

<name>hive.metastore.event.db.notification.api.auth</name>

<value>false</value>

</property>

<property>

<name>hive.stats.autogather</name>

<value>false</value>

</property>

<property>

<name>hive.server2.active.passive.ha.enable</name>

<value>true</value>

</property>

<property>

<name>hive.execution.engine</name>

<value>tez</value>

</property>

<property>

<name>hive.metastore.uris</name>

<value>thrift://hd1.dtstack.com:9083</value>

<description>A comma separated list of metastore uris on which metastore service is running</description>

</property>

<!-- Enable Kerberos for Hive -->

<!-- hivemetastore conf -->

<property>

<name>hive.metastore.sasl.enabled</name>

<value>true</value>

</property>

<property>

<name>hive.server2.thrift.sasl.qop</name>

<value>auth</value>

</property>

<property>

<name>hive.metastore.kerberos.keytab.file</name>

<value>/etc/security/keytab/hive.keytab</value>

</property>

<property>

<name>hive.metastore.kerberos.principal</name>

<value>hive/_HOST@DTSTACK.COM</value>

</property>

<property>

<name>hive.server2.authentication</name>

<value>kerberos</value>

</property>

<!-- hiveserver2 conf -->

<property>

<name>hive.security.metastore.authenticator.manager</name>

<value>org.apache.hadoop.hive.ql.security.HadoopDefaultMetastoreAuthenticator</value>

</property>

<property>

<name>hive.security.metastore.authorization.auth.reads</name>

<value>true</value>

</property>

<property>

<name>hive.security.metastore.authorization.manager</name>

<value>org.apache.hadoop.hive.ql.security.authorization.StorageBasedAuthorizationProvider</value>

</property>

<property>

<name>hive.server2.allow.user.substitution</name>

<value>true</value>

</property>

<property>

<name>hive.metastore.pre.event.listeners</name>

<value>org.apache.hadoop.hive.ql.security.authorization.AuthorizationPreEventListener</value>

</property>

<property>

<name>hive.server2.authentication.kerberos.principal</name>

<value>hive/_HOST@DTSTACK.COM</value>

</property>

<property>

<name>hive.server2.authentication.kerberos.keytab</name>

<value>/etc/security/keytab/hive.keytab</value>

</property>

<property>

<name>hive.server2.zookeeper.namespace</name>

<value>hiveserver2</value>

</property>

</configuration>

EOF
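In a configuration file this long, it is easy to define the same property twice. Hadoop's configuration loader does not complain about this; generally the later value silently replaces the earlier one. The minimal check below assumes each <name>...</name> element sits on one line, as in the file above; the helper name is hypothetical. It prints every property name that occurs more than once:

```shell
#!/bin/sh
# Print property names that appear more than once in a Hadoop-style XML
# configuration file. Assumes each <name>...</name> sits on a single line.
dup_props() {
  sed -n 's:.*<name>\(.*\)</name>.*:\1:p' "$1" | sort | uniq -d
}
```

Run it as `dup_props /opt/hive/conf/hive-site.xml`; empty output means there are no duplicates. If libxml2's `xmllint` is installed, `xmllint --noout /opt/hive/conf/hive-site.xml` also confirms that the file is well-formed XML.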

- Create the required HDFS directories

[root@hd1.dtstack.com conf]# hdfs dfs -mkdir -p /user/hive/warehouse

[root@hd1.dtstack.com conf]# hdfs dfs -mkdir -p /tmp

[root@hd1.dtstack.com conf]# hdfs dfs -chmod g+w /tmp /user/hive/warehouse

[root@hd1.dtstack.com conf]# hdfs dfs -chmod 777 /user/hive/warehouse

- Add the MySQL JDBC driver and Hadoop's Guava jar

[root@hd1.dtstack.com conf]# cp /usr/share/java/mysql-connector-java.jar /opt/apache-hive-3.1.2-bin/lib

[root@hd1.dtstack.com conf]# cp /opt/hadoop/share/hadoop/common/lib/guava-27.0-jre.jar /opt/apache-hive-3.1.2-bin/lib

[root@hd1.dtstack.com conf]# chown -R hive:hadoop /opt/apache-hive-3.1.2-bin
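The Hive 3.1.2 binary release ships its own, older Guava (guava-19.0.jar), while Hadoop 3.x uses guava-27.0-jre. With both jars in lib/, which one wins depends on classpath order. The mismatch typically shows up as a NoSuchMethodError on com.google.common.base.Preconditions.checkArgument, so the usual practice is to keep only the jar copied from Hadoop. The sketch below uses hypothetical helper names; it lists the Guava jars in a directory and fails when there is more than one:

```shell
#!/bin/sh
# Hypothetical helpers for spotting duplicate Guava versions in a lib dir.

# Print the file names of all guava-*.jar files in directory $1.
guava_jars() {
  for f in "$1"/guava-*.jar; do
    # An unmatched glob stays literal, so check that the file really exists.
    if [ -e "$f" ]; then
      basename "$f"
    fi
  done
}

# Succeed only if directory $1 holds at most one Guava jar.
check_single_guava() {
  n=$(guava_jars "$1" | wc -l | tr -d ' ')
  if [ "$n" -gt 1 ]; then
    echo "multiple Guava jars in $1:" >&2
    guava_jars "$1" >&2
    return 1
  fi
  return 0
}
```

If `check_single_guava /opt/apache-hive-3.1.2-bin/lib` reports both versions, remove /opt/apache-hive-3.1.2-bin/lib/guava-19.0.jar to leave only the Hadoop copy.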

3 Initialize Hive

- Create the Hive metastore database in MySQL on hd1.dtstack.com

mysql> create database metastore;

create user 'hive'@'%' identified by '123456';

grant all privileges on metastore.* to 'hive'@'%' ;

Then initialize the metastore schema:

[root@hd1.dtstack.com conf]# cd /opt/apache-hive-3.1.2-bin/bin

[root@hd1.dtstack.com bin]# ./schematool -dbType mysql -initSchema -userName hive -passWord 123456

- Edit core-site.xml on hd1.dtstack.com

Add the following to $HADOOP_HOME/etc/hadoop/core-site.xml:

<property>

<name>hadoop.proxyuser.hive.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hive.groups</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hive.users</name>

<value>*</value>

</property>

Note: these properties were already added during the Hadoop cluster installation, so this step can be skipped.
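These three properties follow a fixed pattern: hadoop.proxyuser.<user>.hosts, .groups and .users. Here <user> is the short name of the impersonating service (hive in this case), and * allows impersonation from any host, for any group and any user. That is convenient on a test cluster; on a production cluster the values are usually narrowed. The hypothetical generator below prints the block, with * as the default for each value:

```shell
#!/bin/sh
# Hypothetical generator for Hadoop proxy-user properties.
# Usage: proxyuser_props <user> [hosts] [groups] [users]   (each defaults to *)
proxyuser_props() {
  user=$1
  hosts=${2:-*}
  groups=${3:-*}
  users=${4:-*}
  for pair in "hosts=$hosts" "groups=$groups" "users=$users"; do
    key=${pair%%=*}   # part before the first '='
    val=${pair#*=}    # part after the first '='
    printf '<property>\n  <name>hadoop.proxyuser.%s.%s</name>\n  <value>%s</value>\n</property>\n' \
      "$user" "$key" "$val"
  done
}
```

`proxyuser_props hive` reproduces the properties above, and `proxyuser_props hive hd1.dtstack.com` restricts the hosts entry to hd1.dtstack.com.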

- Restart the Hadoop cluster on hd1.dtstack.com

[hdfs@hd1.dtstack.com ~]$ stop-yarn.sh

[hdfs@hd1.dtstack.com ~]$ stop-dfs.sh

[hdfs@hd1.dtstack.com ~]$ start-dfs.sh

[hdfs@hd1.dtstack.com ~]$ start-yarn.sh

4 Hive environment variables (skip if already configured as a prerequisite)

Add the Hive environment variables to /etc/profile:

#hive

export HIVE_HOME=/opt/apache-hive-3.1.2-bin

export PATH=$PATH:$HIVE_HOME/bin
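/etc/profile runs for every login shell, and each extra `source /etc/profile` in an already configured shell appends $HIVE_HOME/bin to PATH again. This is harmless but clutters PATH. The minimal idempotent alternative below, with hypothetical helper names, appends a directory only when it is not already present:

```shell
#!/bin/sh
# Succeed if directory $2 is one of the colon-separated entries in $1.
path_has_dir() {
  case ":$1:" in
    *":$2:"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Print the path list $1 with directory $2 appended, unless already present.
path_append() {
  if path_has_dir "$1" "$2"; then
    printf '%s\n' "$1"
  else
    printf '%s\n' "${1:+$1:}$2"
  fi
}
```

In /etc/profile, after defining the functions: `PATH=$(path_append "$PATH" "$HIVE_HOME/bin"); export PATH`.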

5 Install Tez (on every node)

Hive 3.x supports Tez out of the box, but the Tez dependencies have to be added.

Extract:

cd /opt/bigdata

tar -xzvf apache-tez-0.10.2-bin.tar.gz -C /opt

ln -s /opt/apache-tez-0.10.2-bin /opt/tez

Create the Tez directory on HDFS and upload the Tez tarball:

hdfs dfs -mkdir /tez

cd /opt/tez/share

hdfs dfs -put tez.tar.gz /tez
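The tez-site.xml below refers to this upload through tez.lib.uris, as ${fs.defaultFS}/tez/tez.tar.gz. When the property is read, Hadoop's Configuration replaces ${fs.defaultFS} with the fs.defaultFS value from core-site.xml. The hypothetical helper below performs that substitution for this one variable only; it is not a general implementation of Hadoop's variable expansion. It makes it easy to check that the tarball sits where Tez will look:

```shell
#!/bin/sh
# Replace ${fs.defaultFS} in a URI template with the given filesystem URI.
# Only this single variable is handled; nothing else is expanded.
# Usage: expand_defaultfs <fs.defaultFS value> <uri template>
expand_defaultfs() {
  printf '%s\n' "$2" | sed "s|[\$]{fs[.]defaultFS}|$1|g"
}
```

For example: `hdfs dfs -ls "$(expand_defaultfs "$(hdfs getconf -confKey fs.defaultFS)" '${fs.defaultFS}/tez/tez.tar.gz')"`.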

cd /opt/tez/conf/

Configure tez-site.xml (in /opt/tez/conf):

<configuration>

<property>

<name>tez.lib.uris</name>

<value>${fs.defaultFS}/tez/tez.tar.gz</value>

</property>

<property>

<name>tez.use.cluster.hadoop-libs</name>

<value>true</value>

</property>

<property>

<name>tez.history.logging.service.class</name>

<value>org.apache.tez.dag.history.logging.ats.ATSHistoryLoggingService</value>

</property>

<property>

<name>tez.am.resource.memory.mb</name>

<value>2048</value>

</property>

<property>

<name>tez.am.resource.cpu.vcores</name>

<value>1</value>

</property>

<property>

<name>hive.tez.container.size</name>

<value>2048</value>

</property>

<property>

<name>tez.container.max.java.heap.fraction</name>

<value>0.4</value>

</property>

<property>

<name>tez.task.resource.memory.mb</name>

<value>1024</value>

</property>

<property>

<name>tez.task.resource.cpu.vcores</name>

<value>1</value>

</property>

<property>

<name>tez.runtime.compress</name>

<value>true</value>

</property>

<property>

<name>tez.runtime.compress.codec</name>

<value>org.apache.hadoop.io.compress.SnappyCodec</value>

</property>

</configuration>
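These settings leave most of each container outside the JVM heap. When no explicit -Xmx is configured, Tez sizes the heap at roughly the container memory multiplied by tez.container.max.java.heap.fraction. The hypothetical helper below shows what a fraction of 0.4 means for the sizes above, in whole megabytes, truncated:

```shell
#!/bin/sh
# Approximate heap (MB) for a container of $1 MB with heap fraction $2,
# assuming no explicit -Xmx is configured. Truncates like an int cast.
tez_heap_mb() {
  awk -v mem="$1" -v frac="$2" 'BEGIN { printf "%d\n", mem * frac }'
}
```

`tez_heap_mb 1024 0.4` prints 409 and `tez_heap_mb 2048 0.4` prints 819. Tasks therefore get about 409 MB of heap, and the 2048 MB containers about 819 MB.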

Copy tez-site.xml into the Hive and Hadoop configuration directories:

cp /opt/tez/conf/tez-site.xml /opt/hive/conf

cp /opt/tez/conf/tez-site.xml /opt/hadoop/etc/hadoop

Copy the Tez libraries into Hive's lib directory:

cp /opt/tez/lib/* /opt/hive/lib

6 Start Hive

- Create start and stop scripts (on hd1.dtstack.com)

cd /opt/hive/bin

cat >start_hive.sh <<EOF

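#!/bin/sh

# The Hive binary release has no log directory; create it before redirecting output into it

mkdir -p /opt/apache-hive-3.1.2-bin/log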

/opt/apache-hive-3.1.2-bin/bin/hive --service metastore>/opt/apache-hive-3.1.2-bin/log/metastore.log 2>&1 &

/opt/apache-hive-3.1.2-bin/bin/hive --service hiveserver2>/opt/apache-hive-3.1.2-bin/log/hiveserver.log 2>&1 &

EOF

cat >stop_hive.sh <<EOF

#!/bin/sh

ps -ef|grep hive|grep -v grep|awk '{print \$2}'|xargs kill -9

EOF
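The stop script above sends SIGKILL to every process whose command line contains "hive". That includes Beeline sessions, other users' Hive CLIs, and the `sh stop_hive.sh` process itself. The more targeted sketch below matches the Java main classes of the two services instead, and sends SIGTERM so they can shut down cleanly. The class names are the ones used by Hive 3.x launch scripts; the helper names are hypothetical.

```shell
#!/bin/sh
# Hypothetical helpers: stop Hive services by their Java main class instead
# of by any command line that contains "hive".

# Print the main class used when a service is started with `hive --service <name>`.
hive_main_class() {
  case "$1" in
    metastore)   echo 'org.apache.hadoop.hive.metastore.HiveMetaStore' ;;
    hiveserver2) echo 'org.apache.hive.service.server.HiveServer2' ;;
    *)           return 1 ;;
  esac
}

# Send SIGTERM (not SIGKILL) to the processes running that main class.
stop_hive_service() {
  cls=$(hive_main_class "$1") || { echo "unknown service: $1" >&2; return 1; }
  pkill -TERM -f "$cls"
}
```

A stop_hive.sh built on this would call `stop_hive_service hiveserver2` followed by `stop_hive_service metastore`. Keep `kill -9` as a last resort for a process that ignores SIGTERM.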

- Start Hive

chown -R hive:hadoop /opt/apache-hive-3.1.2-bin /opt/apache-tez-0.10.2-bin

chmod -R 755 /opt/apache-hive-3.1.2-bin /opt/apache-tez-0.10.2-bin

[root@hd1.dtstack.com ~]# sh /opt/hive/bin/start_hive.sh

Check the ports

After startup, check that the metastore port 9083 and the HiveServer2 port 10000 are listening:

ss -tunlp | grep 9083

ss -tunlp | grep 10000
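HiveServer2 often takes noticeably longer than the metastore to open its port, so a single check right after start_hive.sh can give a false negative. The minimal polling sketch below assumes the iproute2 `ss` tool used above, with its `sport = :PORT` filter; the helper name is hypothetical:

```shell
#!/bin/sh
# Poll until something listens on TCP port $1, trying at most $2 times
# (default 30) with a 2-second pause. Returns 1 if the port never opens.
wait_for_port() {
  port=$1
  tries=${2:-30}
  i=0
  while [ "$i" -lt "$tries" ]; do
    # -l: listening sockets, -t: TCP, -n: numeric; filter on the local port.
    if ss -ltn "sport = :$port" 2>/dev/null | grep -q LISTEN; then
      return 0
    fi
    i=$((i + 1))
    sleep 2
  done
  return 1
}
```

For example: `wait_for_port 9083 && wait_for_port 10000 60 && echo "Hive is up"`.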

Test a login with beeline -u. The client needs a valid Kerberos ticket, obtained with kinit, before connecting:

beeline -u 'jdbc:hive2://hd1.dtstack.com:10000/default;principal=hive/hd1.dtstack.com@DTSTACK.COM'

For more technical information, visit the Yunche website: https://yunche.pro/?t=yrgw